Microsoft just introduced Phi-3-mini, a lightweight AI language model that is cheaper to run than larger models such as GPT-4 Turbo. Because it is small, it can be deployed locally, potentially bringing similar AI capabilities to smartphones without an internet connection. Model size is typically measured by parameter count: the parameters are the learned values that encode the model’s knowledge for text generation.

Small language models like Microsoft’s Phi-3-mini represent a significant shift towards more sustainable AI. Unlike resource-heavy larger models, Phi-3-mini has 3.8 billion parameters, which makes it efficient enough to run on everyday devices like smartphones. Running locally broadens access to AI and enhances privacy, since data does not have to leave the device.

It achieves high performance by using a carefully optimized dataset, pushing the boundaries of what compact models can do and paving the way for broader AI integration in daily life, from language translation to digital assistance.

What is Phi-3-mini?

Phi-3-mini stands as a remarkable example of Microsoft’s innovative strides in the field of artificial intelligence. This compact yet highly capable language model is designed to deliver robust AI capabilities directly on your devices, bypassing the heavy computational demand typically associated with larger models.

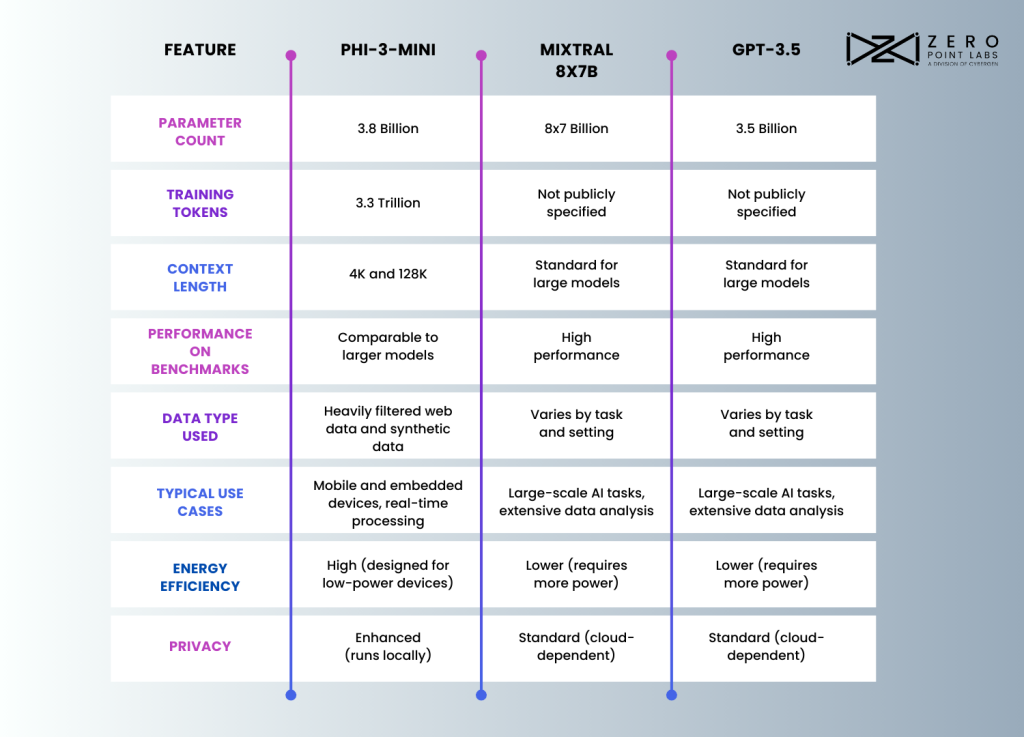

Key Specifications: Phi-3-mini is built with 3.8 billion parameters, a size that balances complexity and efficiency, making it nimble enough for local use on consumer devices. It has been trained on an impressive 3.3 trillion tokens, ensuring a depth of learning and understanding that rivals more substantial models.
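To make the link between parameter count and on-device use concrete, here is a rough back-of-the-envelope sketch. It estimates how much storage the weights alone would need at several numeric precisions. The precision table is an illustrative assumption, not something stated about Phi-3-mini’s shipped builds, and the estimate ignores activations, caches, and runtime overhead.

```python
# Figures taken from the article.
PARAMS = 3.8e9          # parameters
TRAIN_TOKENS = 3.3e12   # training tokens

# Bytes per parameter for common weight formats (illustrative assumption).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, fmt: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[fmt] / 1e9


for fmt in BYTES_PER_PARAM:
    print(f"{fmt}: {weight_memory_gb(PARAMS, fmt):.1f} GB")
# fp32: 15.2 GB
# fp16: 7.6 GB
# int8: 3.8 GB
# int4: 1.9 GB

# Ratio of training tokens to parameters.
tokens_per_param = TRAIN_TOKENS / PARAMS
print(f"tokens per parameter: {tokens_per_param:.0f}")  # 868
```

Under these assumptions, only the lower-precision formats bring the weights down to a size that plausibly fits in a phone’s memory, which is why compression matters for local deployment.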

Technical Innovations

Phi-3-mini is built on a transformer decoder architecture that enables high-level language processing on compact devices. This architecture is good at maintaining context over long stretches of text, which is crucial for tasks that demand nuanced understanding and coherent text generation.
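As a generic illustration of how a transformer decoder keeps track of context, the sketch below shows causal masking: each position can attend only to itself and to earlier positions. This is a toy, pure-Python example of the general technique, not Phi-3-mini’s actual implementation; the function names are hypothetical.

```python
import math


def causal_mask(n: int) -> list[list[bool]]:
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]


def masked_softmax(scores: list[float], allowed: list[bool]) -> list[float]:
    """Softmax over allowed positions only; masked positions get weight 0."""
    peak = max(s for s, ok in zip(scores, allowed) if ok)  # for numerical stability
    exps = [math.exp(s - peak) if ok else 0.0 for s, ok in zip(scores, allowed)]
    total = sum(exps)
    return [e / total for e in exps]


mask = causal_mask(4)

# Attention weights for the second token (index 1) with equal raw scores:
# it can see tokens 0 and 1, but not the later tokens 2 and 3.
weights = masked_softmax([0.0, 0.0, 0.0, 0.0], mask[1])
print(weights)  # [0.5, 0.5, 0.0, 0.0]
```

Because every new token can look back at everything before it, a longer context window directly translates into more text the model can take into account when generating.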

The model comes in two context lengths: 4K tokens for rapid-response applications like chatbots, and 128K tokens for the deeper content analysis required in tasks such as document summarization. This lets users match the variant to the task at hand.
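One way to choose between the two context lengths is to count the tokens a request needs and pick the smallest variant that fits. The sketch below assumes that "4K" means 4,096 tokens and "128K" means 131,072 tokens; the variant labels and the `pick_variant` helper are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str            # hypothetical label, not an official identifier
    context_tokens: int  # maximum tokens (prompt + generated output)


# Ordered from smallest to largest context window (sizes are assumptions).
VARIANTS = (
    Variant("phi-3-mini-4k", 4_096),
    Variant("phi-3-mini-128k", 131_072),
)


def pick_variant(prompt_tokens: int, max_new_tokens: int) -> Variant:
    """Return the smallest variant whose window fits prompt plus output."""
    if prompt_tokens < 0 or max_new_tokens < 0:
        raise ValueError("token counts must be non-negative")
    needed = prompt_tokens + max_new_tokens
    for variant in VARIANTS:
        if needed <= variant.context_tokens:
            return variant
    raise ValueError(f"{needed} tokens exceed the largest context window")


print(pick_variant(1_000, 500).name)   # phi-3-mini-4k
print(pick_variant(60_000, 500).name)  # phi-3-mini-128k
```

A short chatbot turn lands on the 4K variant, while a long document plus its summary needs the 128K one. Requests that exceed both windows have to be split or truncated before they reach the model.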

Furthermore, Phi-3-mini is compatible with software packages built for Llama-2 and uses the same tokenizer, which simplifies integration into existing systems and keeps tokenization consistent across applications. Together, these features make Phi-3-mini more useful and accessible as a tool for developers.

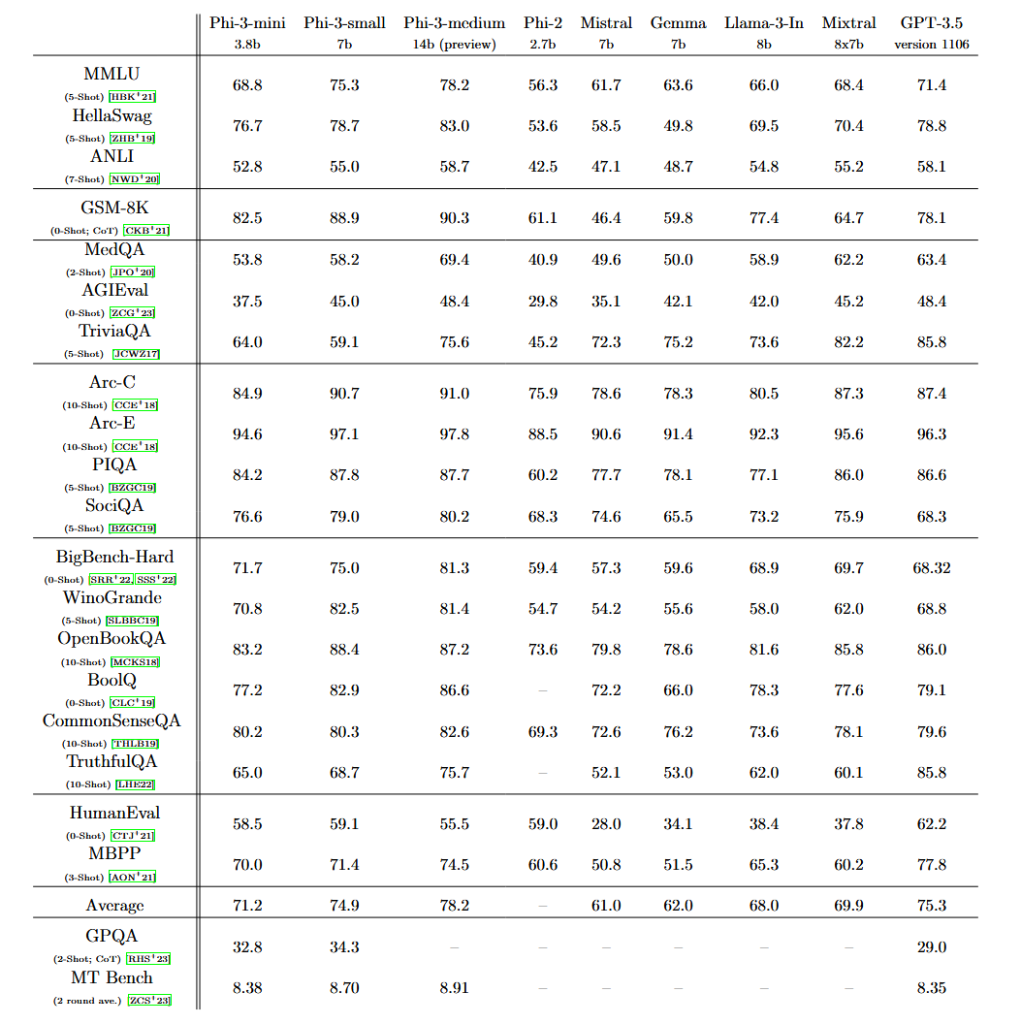

Phi-3-mini Benchmark Chart

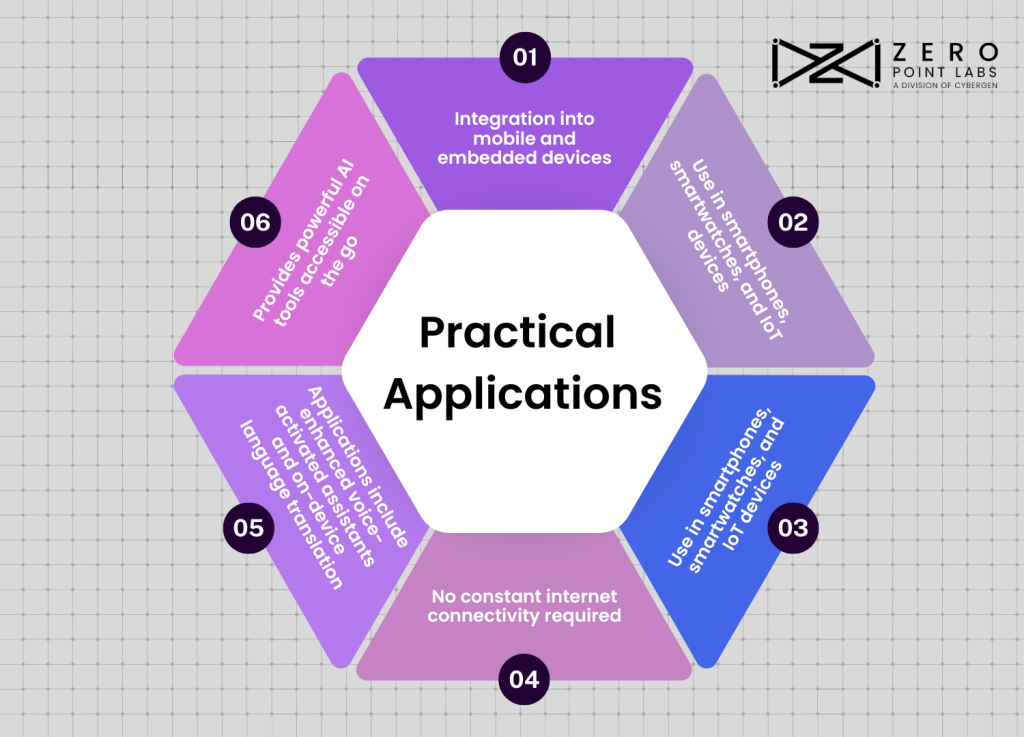

Practical Applications of Phi-3-mini

Phi-3-mini’s design not only advances AI technology but also widens its real-world applicability, particularly in mobile and embedded devices.

Phi-3-mini Limitations

Safety and Ethical Considerations

- Microsoft prioritizes safety and ethics in Phi-3-mini’s development, implementing robust measures for responsible deployment.

- Phi-3-mini incorporates advanced data filtering to avoid biased or inappropriate content during training.

- Rigorous testing protocols identify and address potential vulnerabilities, enhancing reliability and safeguarding against misuse.

- Red-teaming probes Phi-3-mini for harmful or biased responses, and the findings are used to refine the model toward ethical standards.

- These measures underscore Microsoft’s commitment to ethical AI development, establishing Phi-3-mini as a trustworthy and responsible technological advancement.

Future Directions

Phi-3-mini is only a starting point: Microsoft continues to enhance its capabilities and expand its applications. Ongoing improvements focus on computational efficiency and accuracy so that the model remains current and reliable.

Future updates may also improve energy efficiency, making Phi-3-mini better suited to mobile and embedded devices.

Phi-3-mini’s potential future features include multilingual support to break down language barriers and expanded context handling for more complex tasks.

These advancements promise not only to improve Phi-3-mini but also to contribute to broader progress in AI, bringing sophisticated capabilities to everyday technology.